Agentic AI is software that can work toward a goal instead of only returning an answer. In practice, that means it can gather context, decide what to do next, use tools, take actions across systems, and adjust when conditions change. That does not make it magically autonomous. What changes is that it can carry more of the workflow between a user request and a finished outcome.

For business teams, the useful question is not whether agentic AI is real. It is which tasks deserve more autonomy, where humans must stay in control, and what architecture keeps the system useful without letting it go off the rails.

What makes a system agentic

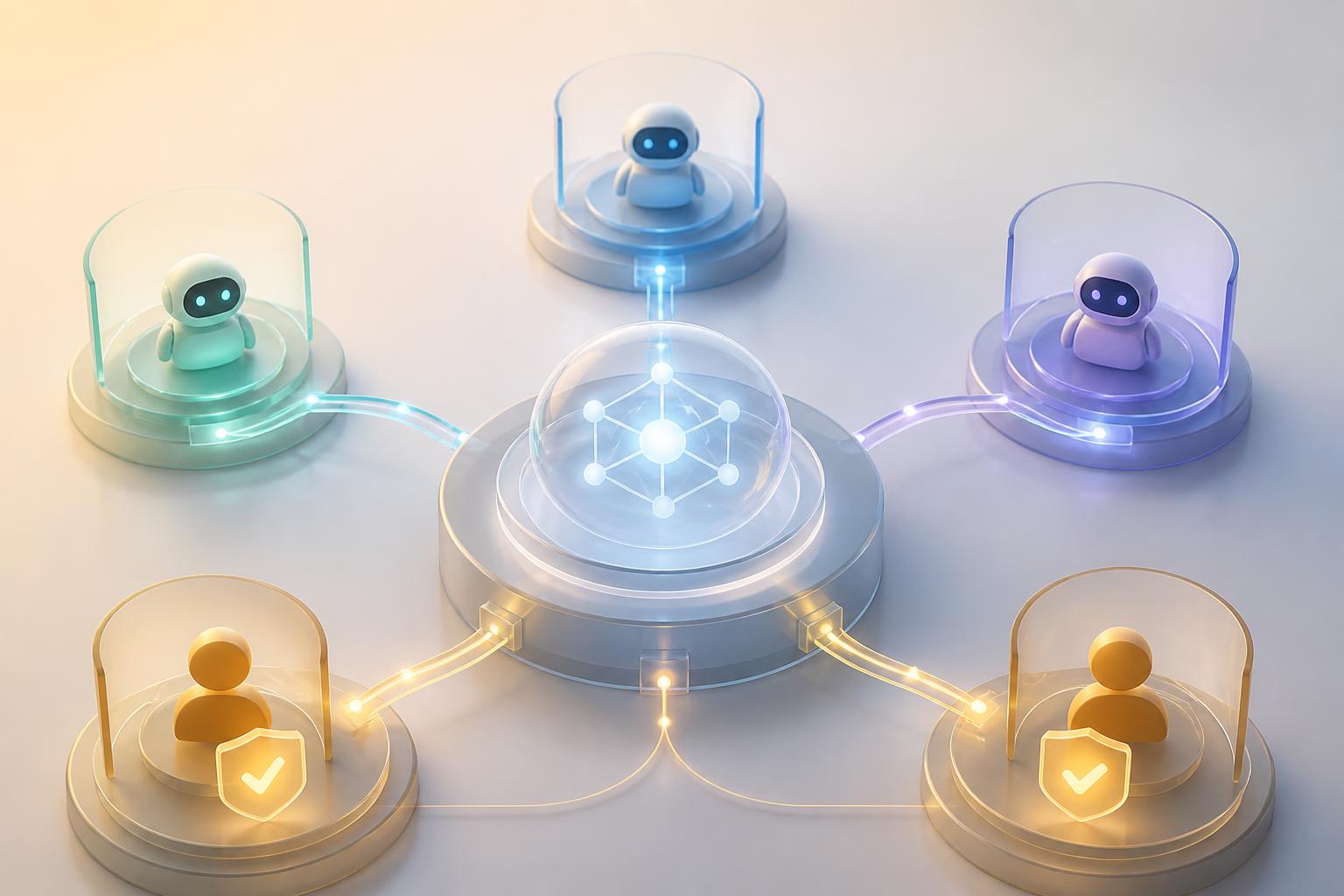

A system becomes agentic when it does more than generate content on demand. A normal chatbot waits for a prompt and replies. An agentic system can pursue a defined objective across multiple steps.

That usually means four things are present at the same time:

- A goal: the system is trying to complete an outcome, not just produce text.

- Decision-making: it chooses the next step based on context and intermediate results.

- Tool use: it can query data, call APIs, update systems, or trigger workflows.

- Adaptation: it can revise its plan when the first path fails, new information arrives, or a human changes a constraint.

This is why agentic AI is best understood as a workflow pattern, not just a model feature. The model may provide reasoning, but the real system also needs tools, memory, permissions, logging, and operating rules.

Agentic AI is not the same as “no humans involved”

One of the biggest mistakes in the market is treating agentic AI as synonymous with total autonomy. Many of the best production systems are only partially autonomous. They gather context, draft actions, and move work forward, but pause for approval on high-risk steps like sending money, changing contracts, deleting records, or contacting customers.

That design is usually stronger than chasing maximum autonomy. Good systems earn trust by being reliable inside clear boundaries.
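
A minimal sketch of that kind of boundary, assuming a hypothetical set of action names and a hypothetical `approve` callback that stands in for a human reviewer. Low-risk actions run directly; the high-risk ones named above pause until someone approves them.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical action names, based on the high-risk examples in the text.
HIGH_RISK = {"send_money", "change_contract", "delete_record", "contact_customer"}


@dataclass
class Decision:
    action: str
    executed: bool
    reason: str


def gate(action: str, approve: Callable[[str], bool]) -> Decision:
    """Run low-risk actions directly; ask a human before high-risk ones."""
    if action not in HIGH_RISK:
        return Decision(action, True, "low risk: executed without approval")
    if approve(action):
        return Decision(action, True, "high risk: approved by a human")
    return Decision(action, False, "high risk: approval denied")


def deny_all(action: str) -> bool:
    """A reviewer who rejects everything, to show the default-safe path."""
    return False


drafted = gate("draft_reply", deny_all)    # executes: not in HIGH_RISK
deleted = gate("delete_record", deny_all)  # blocked: reviewer said no
```

In a real deployment the callback would likely be asynchronous (a ticket, a chat approval, a review queue), but the shape of the check stays the same.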

How the agentic loop works in practice

Most agentic systems follow a repeating operating loop, even if the implementation details vary by framework.

- Perceive: collect relevant inputs from users, documents, APIs, databases, events, or software systems.

- Reason: interpret the situation, decide what matters, and create or revise a plan.

- Act: use tools to perform the next step, such as retrieving a record, drafting a response, opening a ticket, or updating a system.

- Check and continue: inspect the result, log what happened, decide whether the goal is complete, and either stop, escalate, or continue.
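
The four steps above can be sketched as a small loop. This is an illustration under stated assumptions, not any framework's API: `LoopState`, `run_loop`, and the four callbacks are hypothetical names, and the toy callbacks stand in for a model and real tools.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class LoopState:
    goal: str
    observations: list[str] = field(default_factory=list)
    log: list[str] = field(default_factory=list)
    status: str = "running"  # becomes "done" or "escalated"


def run_loop(
    state: LoopState,
    perceive: Callable[[LoopState], str],
    reason: Callable[[LoopState], str],
    act: Callable[[str], str],
    is_complete: Callable[[LoopState, str], bool],
    max_steps: int = 5,
) -> LoopState:
    for step in range(1, max_steps + 1):
        state.observations.append(perceive(state))  # Perceive
        action = reason(state)                       # Reason
        if action == "escalate":
            state.log.append(f"step {step}: escalated by plan")
            state.status = "escalated"
            return state
        result = act(action)                         # Act
        state.log.append(f"step {step}: {action} -> {result}")  # Check: log it
        if is_complete(state, result):               # Check: goal reached?
            state.status = "done"
            return state
    # Step budget exhausted: hand off instead of looping forever.
    state.log.append("step budget exhausted: escalated")
    state.status = "escalated"
    return state


# Toy stand-ins; a real system would call a model and real tools here.
def perceive(state: LoopState) -> str:
    return f"observation {len(state.observations) + 1}"

def reason(state: LoopState) -> str:
    return "lookup_order" if len(state.observations) == 1 else "draft_reply"

def act(action: str) -> str:
    return f"{action} ok"

def is_complete(state: LoopState, result: str) -> bool:
    return result == "draft_reply ok"


final = run_loop(LoopState(goal="answer shipping question"), perceive, reason, act, is_complete)
```

The step budget is the design choice worth noticing: a loop that cannot finish escalates instead of running indefinitely.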

A customer support example makes this concrete. A non-agentic assistant might answer a shipping question from a knowledge base. An agentic support workflow could verify the order, check shipment status, draft a reply, offer the next best action, and route the case to a human if the refund amount or policy exception crosses a threshold.
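
As a rough sketch of that support workflow, assuming a hypothetical `SupportCase` record and an illustrative refund threshold (a real value would come from the team's refund policy):

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SupportCase:
    order_id: str
    order_verified: bool
    shipment_status: str
    refund_amount: float
    policy_exception: bool


@dataclass
class Routing:
    route: str            # "auto" or "human"
    reply: Optional[str]  # drafted reply, if one could be written
    why: str


# Hypothetical threshold, chosen only for illustration.
REFUND_APPROVAL_THRESHOLD = 100.0


def handle_case(case: SupportCase) -> Routing:
    """Verify the order, draft a reply, then decide who sends it."""
    if not case.order_verified:
        return Routing("human", None, "order could not be verified")
    reply = f"Order {case.order_id} is currently: {case.shipment_status}."
    if case.policy_exception or case.refund_amount > REFUND_APPROVAL_THRESHOLD:
        return Routing("human", reply, "refund or policy exception needs review")
    return Routing("auto", reply, "within policy")


routine = handle_case(SupportCase("A-17", True, "in transit", 0.0, False))
large_refund = handle_case(SupportCase("A-18", True, "lost", 250.0, False))
```

Note that the drafted reply still travels with the escalated case, so the human reviewer starts from the agent's work and does not have to redo it.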

An internal operations example looks similar. An employee asks for onboarding help. The system checks the role, creates required tickets, retrieves policy requirements, proposes a start-date checklist, and asks a manager for approval before provisioning sensitive access.

The important point is that the system is not just speaking. It is moving work forward.

Choose the lowest useful level of autonomy

Not every business problem needs a highly autonomous agent. In many cases, a low- or medium-agency workflow is the better design because it is easier to test, cheaper to run, and simpler to govern.


| Pattern | How it behaves | Best fit |
|---|---|---|
| Assistant | Answers questions or drafts content but does not take actions | Knowledge help, drafting, search, summarization |
| Guided workflow | Uses tools and follows a mostly fixed path with approvals at key steps | Support triage, intake, routing, policy-heavy internal operations |
| Agentic workflow | Plans, acts, retries, and adapts inside defined boundaries | Multi-step business processes with measurable outcomes and clear rollback paths |
| High-autonomy multi-agent system | Specialized agents coordinate across subtasks with limited human intervention | Complex, high-volume workflows that justify orchestration overhead |

If a workflow is simple, repetitive, and already well-defined, ordinary automation may be enough. If the task changes based on context, requires judgment between several possible actions, or benefits from dynamic tool selection, agentic AI becomes more useful.

Good early use cases

- Customer support workflows that combine knowledge retrieval, status checks, and escalation rules

- Internal help desk triage across HR, IT, and operations tools

- Research and preparation work where the system gathers evidence, drafts recommendations, and hands off to a human decision-maker

- Incident response support that collects logs, summarizes probable causes, and proposes next actions

Bad early use cases

- Workflows with unclear ownership or no success metric

- Processes that lack API access or dependable source data

- High-risk actions with no approval gates, audit trail, or rollback plan

- Problems that are really just simple rules masquerading as “agentic” projects

How to implement agentic AI without losing control

The safest rollout pattern is not “turn on autonomy and hope.” It is controlled expansion.

- Start with one narrow workflow. Choose a process with high friction, clear boundaries, and a measurable outcome such as resolution time, handoff rate, or ticket backlog reduction.

- Define tool boundaries early. Decide exactly which systems the agent can read from, write to, or trigger. Least privilege matters here.

- Separate low-risk and high-risk actions. Reading data, drafting outputs, or creating suggestions can often be automated earlier than payments, permissions, customer commitments, or destructive actions.

- Add human approvals where the downside is real. A human-in-the-loop step should exist because the action is consequential, not because the team is nervous in general.

- Instrument the workflow. Log tool calls, state changes, retries, failures, escalations, and final outcomes. If you cannot inspect what happened, you do not have a production-ready agentic system.

- Expand autonomy only after evidence. Let the system earn more freedom by proving accuracy, consistency, and recoverability over time.
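
Two of the steps above, tool boundaries and instrumentation, are easy to sketch together. The example below assumes a hypothetical `ToolBox` wrapper with "read" and "write" scopes; the tool names and ticket data are made up. Every call is checked against the allowed scopes and recorded, whether it succeeds, fails, or is denied.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Tool:
    name: str
    scope: str                 # "read" or "write"
    fn: Callable[..., object]


@dataclass
class ToolBox:
    allowed_scopes: set[str]
    tools: dict[str, Tool] = field(default_factory=dict)
    audit_log: list[dict] = field(default_factory=list)

    def register(self, tool: Tool) -> None:
        self.tools[tool.name] = tool

    def call(self, name: str, **kwargs) -> object:
        tool = self.tools.get(name)
        # Least privilege: unknown tools and out-of-scope tools are both refused.
        if tool is None or tool.scope not in self.allowed_scopes:
            self.audit_log.append({"tool": name, "status": "denied"})
            raise PermissionError(f"tool not permitted: {name}")
        try:
            result = tool.fn(**kwargs)
        except Exception as exc:
            self.audit_log.append({"tool": name, "status": "failed", "error": str(exc)})
            raise
        self.audit_log.append({"tool": name, "status": "ok", "args": kwargs})
        return result


# A read-only agent: it can look tickets up but cannot close them.
box = ToolBox(allowed_scopes={"read"})
box.register(Tool("get_ticket", "read", lambda ticket_id: {"id": ticket_id, "state": "open"}))
box.register(Tool("close_ticket", "write", lambda ticket_id: True))

ticket = box.call("get_ticket", ticket_id="T-1")
# box.call("close_ticket", ticket_id="T-1") would raise PermissionError and log a denial.
```

Expanding autonomy later then becomes a reviewable change to `allowed_scopes`, with no need to rewrite the agent.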

Prerequisites matter more than most teams expect. Clean source data, stable APIs, role-based permissions, escalation paths, and process ownership usually matter more than choosing a fashionable framework.

Common mistakes that make agentic projects fail

1. Confusing a demo with an operating model

A demo can look impressive with one prompt and one happy path. Production requires exception handling, retries, observability, identity, approval logic, and containment when the workflow goes wrong.
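
One piece of that production work, bounded retries with containment, might look like the sketch below. `TransientError` and `Escalate` are hypothetical exception types; the idea is to retry only failures known to be temporary and to hand off to a human once the budget is spent.

```python
import time
from typing import Callable


class TransientError(Exception):
    """A failure that is worth retrying, such as a timeout."""


class Escalate(Exception):
    """Raised when the workflow should stop and hand off to a human."""


def call_with_retries(step: Callable[[], object], attempts: int = 3, base_delay: float = 0.0) -> object:
    last_error = None
    for attempt in range(1, attempts + 1):
        try:
            return step()
        except TransientError as exc:  # any other exception propagates immediately
            last_error = exc
            if attempt < attempts:
                time.sleep(base_delay * 2 ** (attempt - 1))  # exponential backoff
    raise Escalate(f"gave up after {attempts} attempts: {last_error}")


# Toy step that fails twice, then succeeds.
calls = {"n": 0}

def flaky() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientError("timeout")
    return "ok"


outcome = call_with_retries(flaky)
```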

2. Giving the system too much freedom too early

More autonomy is not automatically better. If the workflow can be solved with structured steps and selective judgment, use that simpler design. Overdesigned autonomy creates testing and governance problems faster than it creates value.

3. Treating prompt quality as the whole problem

Prompts matter, but production quality usually breaks on bad data, weak permissions, missing logs, brittle integrations, and unclear business rules.

4. Using multiple agents when one will do

Multi-agent systems add coordination overhead. Use them when specialization clearly improves quality or throughput, not because the architecture diagram looks more advanced.

5. Skipping evaluation

You need a way to judge whether the system made the right call, used the right tool, stayed inside policy, and improved the business metric you care about. Otherwise the project becomes a belief system, not an operational asset.
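
A small evaluation harness can cover the first three of those checks. The sketch below assumes hypothetical `EvalCase` and `RunRecord` shapes and made-up case data; the business metric itself would still be measured separately, against real outcomes.

```python
from dataclasses import dataclass


@dataclass
class EvalCase:
    name: str
    expected_tool: str
    expected_decision: str


@dataclass
class RunRecord:
    tool_used: str
    decision: str
    policy_violations: int


def score(cases: list[EvalCase], runs: dict[str, RunRecord]) -> dict[str, float]:
    """Fraction of cases with the right tool, the right call, and no policy violations."""
    n = len(cases)
    if n == 0:
        raise ValueError("need at least one evaluation case")
    right_tool = sum(runs[c.name].tool_used == c.expected_tool for c in cases)
    right_call = sum(runs[c.name].decision == c.expected_decision for c in cases)
    in_policy = sum(runs[c.name].policy_violations == 0 for c in cases)
    return {"right_tool": right_tool / n, "right_call": right_call / n, "in_policy": in_policy / n}


cases = [
    EvalCase("late_package", "check_shipment", "auto_reply"),
    EvalCase("large_refund", "check_order", "escalate"),
]
runs = {
    "late_package": RunRecord("check_shipment", "auto_reply", 0),
    "large_refund": RunRecord("check_order", "auto_reply", 0),  # should have escalated
}
report = score(cases, runs)
```

Here the agent picked the right tool both times and stayed in policy, but made the wrong call on one of the two cases, which is exactly the kind of gap a demo would hide.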

A practical checklist before rollout

- Define the workflow outcome in one sentence

- Name the system owner and escalation owner

- List every tool the system can use and the permissions each one needs

- Mark which actions require human approval

- Set three success metrics and one stop condition

- Log every tool call, retry, handoff, and final outcome

- Test common edge cases before opening access broadly

- Start with a lower-stakes environment before expanding scope
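
Part of this checklist can be made machine-checkable. The sketch below assumes a hypothetical `RolloutPlan` record with illustrative field names and values; it covers only the items that can be verified from a plan document, not the testing and staging steps.

```python
from dataclasses import dataclass


@dataclass
class RolloutPlan:
    outcome: str                      # the workflow outcome, in one sentence
    system_owner: str
    escalation_owner: str
    tool_permissions: dict[str, str]  # tool name -> permission it needs
    approval_required: set[str]       # tool names that need human approval
    success_metrics: list[str]
    stop_condition: str

    def problems(self) -> list[str]:
        """Return unmet checklist items; an empty list means none were found."""
        issues = []
        if not self.outcome.strip():
            issues.append("outcome is not defined")
        if not self.system_owner.strip() or not self.escalation_owner.strip():
            issues.append("system owner and escalation owner must both be named")
        if not self.tool_permissions:
            issues.append("no tools listed")
        unknown = self.approval_required - set(self.tool_permissions)
        if unknown:
            issues.append(f"approval set for unlisted tools: {sorted(unknown)}")
        if len(self.success_metrics) != 3:
            issues.append("expected exactly three success metrics")
        if not self.stop_condition.strip():
            issues.append("no stop condition set")
        return issues


plan = RolloutPlan(
    outcome="Resolve routine shipping questions without a human handoff.",
    system_owner="support-ops lead",
    escalation_owner="on-call support manager",
    tool_permissions={"get_order": "read", "issue_refund": "write"},
    approval_required={"issue_refund"},
    success_metrics=["resolution time", "handoff rate", "ticket backlog"],
    stop_condition="pause if any refund is issued without approval",
)
open_items = plan.problems()
```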

Agentic AI matters because it moves AI from response generation toward workflow execution. But the practical win is not “full autonomy.” The practical win is building systems that can handle more of the work while humans keep control over the decisions that actually carry risk.

If you keep that principle in view, agentic AI becomes much easier to evaluate. Start with the business process, choose the lowest useful level of autonomy, add clear boundaries, and only expand from there.